Adaptive Multi-View Live Video Streaming for Teledriving Using a Single Hardware Encoder

Dec 2, 2020

Markus Hofbauer

Christopher Kuhn

Goran Petrovic

Eckehard Steinbach

Image credit: IEEE

Abstract

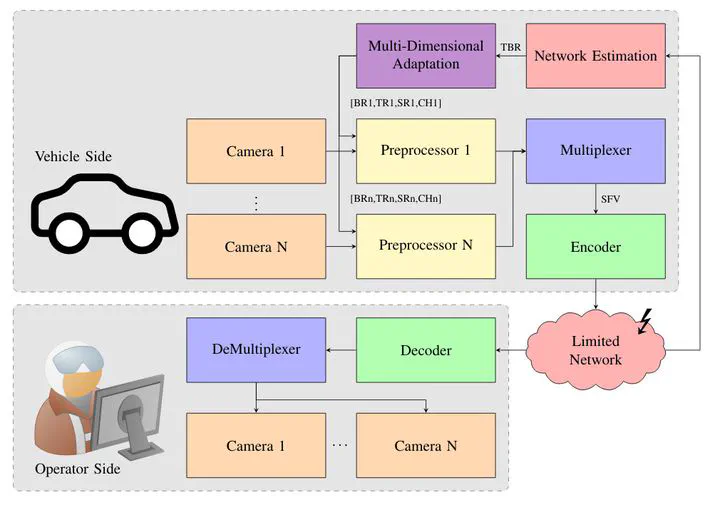

Teleoperated driving (TOD) is a possible solution to cope with failures of autonomous vehicles. In TOD, the human operator perceives the traffic situation via video streams of multiple cameras from a remote location. Adaptation mechanisms are needed in order to match the available transmission resources and provide the operator with the best possible situation awareness. This includes the adjustment of individual camera video streams according to the current traffic situation. The limited video encoding hardware in vehicles requires the combination of individual camera frames into a larger superframe video. While this enables the encoding of multiple camera views with a single encoder, it does not allow for rate/quality adaptation of the individual views. To this end, we propose a novel concept that uses preprocessing filters to enable individual rate/quality adaptations in the superframe video. The proposed preprocessing filters allow for the usage of existing multidimensional adaptation models in the same way as for individual video streams using multiple encoders. Our experiments confirm that the proposed concept is able to control the spatial, temporal and quality resolution of individual segments in the superframe video. Additionally, we demonstrate the usability of the proposed method by applying it in a multi-view teledriving scenario. We compare our approach to individually encoded video streams and a multiplexing solution without preprocessing. The results show that the proposed approach produces bitrates for the individual video streams which are comparable to the bitrates achieved with separate encoders. While achieving a similar bitrate for the most important views, our approach requires a total bitrate that is 40% smaller compared to the multiplexing approach without preprocessing.
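The superframe idea can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not specify the actual filters, so the block averaging (spatial) and frame repetition (temporal) used here are assumptions chosen for illustration, and the names `ViewSettings`, `ViewFilter`, `reduce_spatial`, and `compose_superframe` are hypothetical. The sketch only shows where per-view preprocessing sits relative to superframe composition; the single encoder that would consume the superframe is left out.

```python
from dataclasses import dataclass

Frame = list[list[int]]  # grayscale image as rows of pixel values


@dataclass
class ViewSettings:
    """Hypothetical per-view adaptation settings (assumed, not from the paper)."""
    spatial_factor: int = 1   # 1 = full detail, 2 = average over 2x2 blocks
    temporal_factor: int = 1  # 1 = refresh every frame, 2 = every second frame


def reduce_spatial(frame: Frame, factor: int) -> Frame:
    """Replace each factor x factor block by its mean, keeping the frame size."""
    if factor <= 1:
        return [row[:] for row in frame]
    height, width = len(frame), len(frame[0])
    out = [[0] * width for _ in range(height)]
    for by in range(0, height, factor):
        for bx in range(0, width, factor):
            ys = range(by, min(by + factor, height))
            xs = range(bx, min(bx + factor, width))
            mean = sum(frame[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


class ViewFilter:
    """Stateful preprocessing filter for one camera view."""

    def __init__(self, settings: ViewSettings) -> None:
        self.settings = settings
        self._last: Frame | None = None
        self._count = 0

    def apply(self, frame: Frame) -> Frame:
        # Refresh the view only on every temporal_factor-th frame;
        # otherwise repeat the previously emitted frame.
        if self._last is None or self._count % self.settings.temporal_factor == 0:
            self._last = reduce_spatial(frame, self.settings.spatial_factor)
        self._count += 1
        return self._last


def compose_superframe(frames: list[Frame]) -> Frame:
    """Place equally sized frames side by side in one superframe."""
    return [sum((f[y] for f in frames), []) for y in range(len(frames[0]))]
```

In this sketch every view keeps its size and position inside the superframe, so the encoder configuration never changes. Any bitrate saving would come from the filtered regions carrying less detail or repeating unchanged content, which is an assumption about typical encoder behavior rather than a result taken from the paper.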

Publication

In 22nd IEEE International Symposium on Multimedia