Reverse Error Modeling for Improved Semantic Segmentation

Oct 16, 2022

Christopher Kuhn

Markus Hofbauer

Goran Petrovic

Eckehard Steinbach

Image credit: IEEE

Abstract

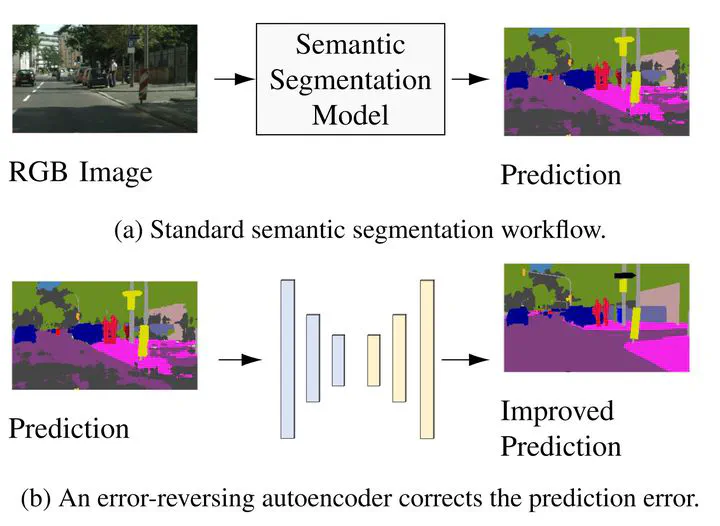

We propose the concept of error-reversing autoencoders (ERA) for correcting pixel-wise errors made by an arbitrary semantic segmentation model. To this end, we reframe the segmentation model as an error function applied to the ground-truth labels. We then train an autoencoder to reverse this error function. During testing, the autoencoder reverses the approximated error function to correct the classification errors. We consider two sources of errors. First, we target the errors a model makes despite having been trained with clean, accurately labeled images. In this case, our proposed approach achieves an improvement of around 1% on the Cityscapes data set with the state-of-the-art DeepLabV3+ model. Second, we target errors introduced by compromised images. With JPEG-compressed images as input, our approach improves the segmentation performance by over 70% for high levels of compression. The proposed architecture is simple to implement and fast to train, and it can be applied to any semantic segmentation model as a post-processing step.
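The core idea of treating a segmentation model as an error function E applied to ground-truth label maps y, and then fitting a mapping g so that g(E(y)) is close to y, can be illustrated with a deliberately small sketch. Several assumptions here are not from the paper: the label maps are tiny synthetic 8x8 grids with three classes, E is simulated by random pixel flips instead of a real segmentation network such as DeepLabV3+, and the "autoencoder" is a linear one with a 24-dimensional bottleneck fitted in closed form by reduced-rank ridge regression rather than a trained neural network. All function names (`make_ground_truth`, `error_function`, `fit_linear_era`, `reverse_errors`) are hypothetical.

```python
import numpy as np

H, W, C = 8, 8, 3  # illustrative sizes: tiny label maps with 3 classes (assumption)


def make_ground_truth(n, rng):
    """Synthetic ground-truth label maps: every row belongs to one random class."""
    row_classes = rng.integers(0, C, size=(n, H))
    return np.repeat(row_classes[:, :, None], W, axis=2)  # shape (n, H, W)


def error_function(labels, p, rng):
    """Hypothetical stand-in for a segmentation model's errors:
    each pixel is switched to a different, random class with probability p."""
    flip = rng.random(labels.shape) < p
    shift = rng.integers(1, C, size=labels.shape)  # 1..C-1, so a flipped pixel always changes class
    return np.where(flip, (labels + shift) % C, labels)


def one_hot(labels):
    """(n, H, W) integer maps -> (n, H*W*C) flattened one-hot vectors."""
    return np.eye(C)[labels].reshape(len(labels), -1)


def fit_linear_era(noisy, clean, rank=24, lam=1.0):
    """Fit encoder/decoder matrices mapping noisy one-hot maps back to clean ones.

    Reduced-rank ridge regression: the ridge fit is projected onto its top
    `rank` singular directions, giving a linear autoencoder with a
    `rank`-dimensional bottleneck (a simplification, not the paper's model).
    """
    X, Y = one_hot(noisy), one_hot(clean)
    d = X.shape[1]
    B = np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ Y)  # full-rank ridge solution
    _, _, Vt = np.linalg.svd(X @ B, full_matrices=False)     # principal directions of the fit
    V = Vt[:rank].T                                          # (d, rank)
    encoder, decoder = B @ V, V.T                            # (d, rank), (rank, d)
    return encoder, decoder


def reverse_errors(noisy, encoder, decoder):
    """Encode, decode, and take the per-pixel argmax to obtain corrected labels."""
    scores = one_hot(noisy) @ encoder @ decoder
    return scores.reshape(len(noisy), H, W, C).argmax(axis=-1)


# Usage: train on (prediction, ground truth) pairs, then correct unseen predictions.
rng = np.random.default_rng(0)
train_gt = make_ground_truth(2000, rng)
train_pred = error_function(train_gt, p=0.3, rng=rng)
enc, dec = fit_linear_era(train_pred, train_gt)

test_gt = make_ground_truth(500, rng)
test_pred = error_function(test_gt, p=0.3, rng=rng)
corrected = reverse_errors(test_pred, enc, dec)

acc_before = (test_pred == test_gt).mean()
acc_after = (corrected == test_gt).mean()
print(f"pixel accuracy before: {acc_before:.3f}, after: {acc_after:.3f}")
```

Because each row of the synthetic ground truth has a single class, the best linear reversal here amounts to a row-wise majority vote, so the corrected accuracy should clearly exceed the roughly 70% of the corrupted input. A real error-reversing autoencoder would instead have to learn the spatial structure of the data set's label maps, and its gains depend on the segmentation model and the type of error.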

Type

Publication

In the 29th IEEE International Conference on Image Processing (ICIP)